By Vincent Tran – IMT Solution Architect

After successfully implementing the IBM InfoSphere Master Data Management solution, our customers start to use the master data for person identification and matching across the enterprise. This is typically coupled with adding new types of data sources that contribute to their master data sets. But that can make linking more challenging, as each source varies in completeness and correctness.

To help with this challenge, we help our customers enrich their data – both in real time and via batch – so they can improve match quality and deliver additional business value across the entire enterprise.

Let’s look at what’s involved in data enrichment and how you can get started.

What data is most often enriched?

In healthcare, the most requested data enrichment requests – by far – are patient and guarantor addresses and healthcare provider contact information and credentials.

Organizations that deal with vendors and suppliers help keep the data current by enriching that information from sources such as Dun & Bradstreet.

What data is available for data enrichment?

There are a lot of external data sources available online to consume, with more appearing all the time.

Some commercial data sources are:

- Dun & Bradstreet for organizational data

- National Change of Address to update your addresses

- Google Maps API to standardize addresses

- IBM InfoSphere Quality Stage Address Verification

Other public data sources and open source libraries are also available:

- NPPES NPI Provider Registry from CMS

- OIG List of Excluded Individuals/Entities from federally funded health programs

- Open Street Maps to standardize addresses

Many of the sources above assist with verification and correction of address data. Utilizing better address data is a trending topic this year and provides a quick win to improve data quality linkages. And that’s only a sample.

While USPS data cannot be currently used for healthcare data, there’s talk of changing that. In fact, Pew Charitable Trusts wrote a letter to the National Coordinator for Health Information Technology that states:

Research has shown that standardizing specific data elements can improve match rates. Use of the U.S. Postal Service (USPS) format for address (which indicates, for example, appropriate street suffixes) can improve the accuracy of matching records by approximately 3%,which could result in tens of thousands of additional correct record linkages per day. An organization with a match rate of 85%, for example, could see its unlinked records reduced by 20% with standardization of address alone.

My team has ample experience with several Data Enrichment implementation patterns for MDM SE and can advise you on best practices depending on your specific needs. Typically, we start from one of two perspectives – but that can vary with your needs.

Option 1: Load external data to MDM as new sources

The most straightforward option is to simply add the external data as a new source in your MDM implementation. MDM uses these records to probabilistically match and link the external data to your existing data, then augments your composite views with the extra information needed. However, if your external data set consists of the entire US population, you might risk increased licensing costs by adding a large number of new records that may be geographically irrelevant. One solution is to only load data from your state, but a more advanced way is to load only records that match your existing source records. Identifying which records to load can be done through MDM bulk cross-match jobs or using the real-time APIs to search for matches.

Example:

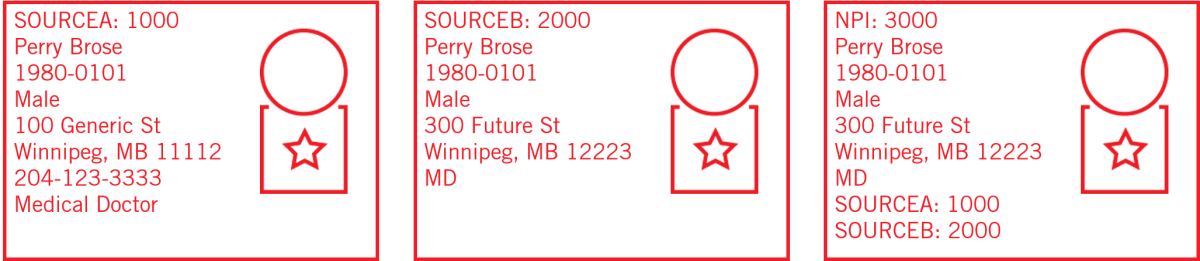

In the above Provider data example, SOURCEA:1000 and SOURCEB:2000 do not score high enough to link because they only have Name and DOB in common. It is only when we include the NPI Registry record that knows of both source identifiers that the records transitively link together.

Option 2: Standardize and load new attributes, but retain original source values

Another data enrichment pattern is to augment your existing source records with just new attributes. Note that it is always best practice to retain the original source attribute values from the contributing source systems for data lineage and stewardship.

A common example is to create a new standardized address attribute in MDM and store the standardized address calculated by using the external API services or libraries so that you can retain both values. If your address allows for multiple active values, then we create a new implementation-defined segment that contains both the original source field values and the standardized field values. The enriched attributes or fields can contribute to the algorithm and composite views, while you retain the original source values.

For example, let’s say you have two addresses:

| Street Line 1 | Street Line 2 | City | State | Postal Code |

| 100 Generic St. | PO BOX 234 | Winnipeg | MB | 11112 |

| Street Line 1 | Street Line 2 | City | State | Postal Code |

| 234-100 Generic Street | Leave at Front Door | Winnipeg | Manitoba | 11112 |

MDM will not give a high address match score due to the many differences. However, you can clearly see they are the same address. Using software or APIs to standardize and verify the address will improve the address match score and provide a standardized view of the addresses for downstream consumers.

| Street Line 1 | Street Line 2 | City | State | Postal Code |

| 100 Generic Street | P.O. Box 234 | Winnipeg | MB | 11112-3322 |

Example: Data enrichment in action

We have worked with several provider implementations that wanted to augment their provider sources with information available from the state provider registry and the national NPI provider registry.

For the state provider registry, this is relatively easy: we simply batch load the state registry records as a new source.

At one southeastern US organization, we determined that loading all of the data from the National Plan and Provider Enumeration System (NPPES) would exceed the client’s licensing limit. To solve this, we processed the weekly NPI provider registry files and used the MDM Java APIs to search and load only the provider records that scored above the clerical review threshold.

Start implementing data enrichment with technologies you currently have

You can retrieve or integrate with your external data sources by using two IBM products designed to use with IBM MDM SE: IBM Data Stage and IBM Integration Bus. IBM Data Stage is good for extracts. IBM Integration Bus is good for both extracts and real-time integrations. At IMT, we also use Apache Camel, which is an open source integration framework that is lightweight and powerful.

Another option in your data enrichment toolkit in an IBM MDM handler. A handler is enables real-time data enrichment that can be executed before or after any interaction in the MDM Engine. One advantage to this approach is that data enrichment actions can be invoked as soon as changes to your MDM data occur, whether from integrated web apps like Inspector, or from changes in your source systems.

Intrigued by the idea of data enrichment but not sure where to start? We can help! Check out this solution brief or contact me directly at vincent@imt.ca